I wrote this in February 2016, before I knew of Neuralink’s existence. It was in response to the following question:

Implants + Internet = ImplanTelepathy™!

I wrote this in February 2016, before I knew of Neuralink’s existence. It was in response to the following question:

Implants + Internet = ImplanTelepathy™!

“In our world,” said Eustace, “a star is a huge ball of flaming gas.”

“Even in your world, my son, that is not what a star is but only what it is made of…”

The Voyage of the Dawn Treader, CS Lewis

I really like the quote above, which is from the Chronicles of Narnia. It raises a neat little metaphysical question:

Why do we assume that what a thing is made up of is what a thing is?

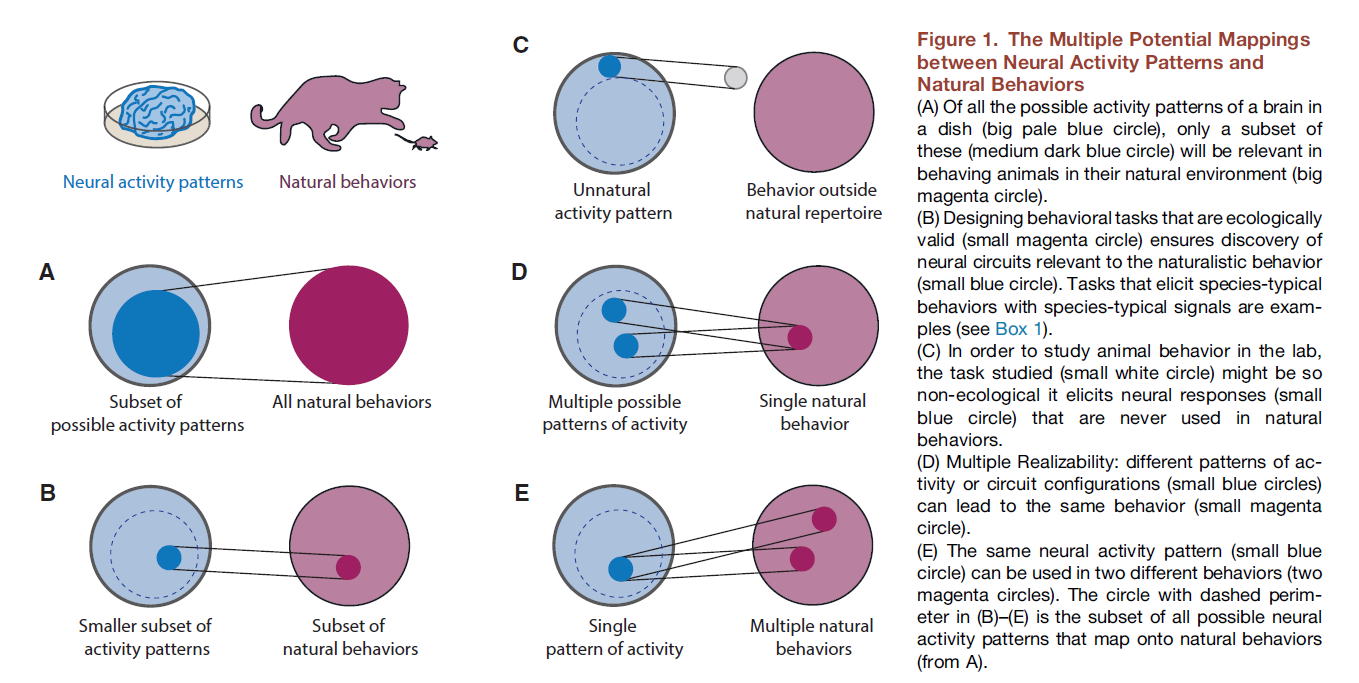

A Quora conversation led me to recent paper in Neuron that highlights a very important problem with a lot of neuroscience research: there is insufficient attention paid to the careful analysis of behavior. The paper is not quite a call to return to behaviorism, but it is an invitation to consider that the pendulum has swing too far in the opposite direction, towards ‘blind’ searches for neural correlates. The paper is a wonderful big picture critique, so I’d like to just share some excerpts.

Someone on Quora asked the following question: What’s the correlation between complexity and consciousness?

Here’s my answer:

Depends on who you ask! Both complexity and consciousness are contentious words, and mean different things to different people.

I’ll build my answer around the idea of complexity, since it’s easier to talk about scientifically (or at least mathematically) than consciousness. Half-joking comments about complexity and consciousness are to be found in italics.

I came across a nice list of measures of complexity, compiled by Seth Lloyd, a researcher from MIT, which I will structure my answer around. [pdf]

Lloyd describes measures of complexity as ways to answer three questions we might ask about a system or process:

1. Difficulty of description: Some objects are complex because they are difficult for us to describe. We frequently measure this difficulty in binary digits (bits), and also use concepts like entropy (information theory) and Kolmogorov (algorithmic) complexity. I particularly like Kolmogorov complexity. It’s a measure of the computational resources required to specify a string of characters. It’s the size of the smallest algorithm that can generate that string of letters or numbers (all of which can be converted into bits). So if you have a string like “121212121212121212121212”, it has a description in English — “12 repeated 12 times” — that is even shorter that the actual string. Not very complex. But the string “asdh41ubmzzsa4431ncjfa34” may have no description shorter than the string itself, so it will have higher Kolmogorov complexity. This measure of complexity can also give us an interesting way to talk about randomness. Loosely speaking, a random process is one whose simulation is harder to accomplish than simply watching the process unfold! Minimum message length is a related idea that also has practical applications. (It seems Kolmogorov complexity is technically uncomputable!)

Consciousness is definitely hard to describe. In fact we seem to be stuck at the description stage at the moment. Describing consciousness is so difficult that bringing in bits and algorithms seem a tad premature. (Though as we shall see, some brave scientists beg to differ.)

2. Difficulty of creation: Some objects and processes are seen as complex because they are really hard to make. Komogorov complexity could show up here too, since simulating a string can be seen both as an act of description (the code itself) and an act of creation (the output of the code). Lloyd lists the following terms that I am not really familiar with: Time Computational Complexity; Space Computational Complexity; Logical depth; Thermodynamic depth; and “Crypticity” (!?). In additional to computational difficulty, we might add other costs: energetic, monetary, psychological, social, and ecological. But perhaps then we’d be confusing the complex with the cumbersome? 🙂

Since we haven’t created a consciousness yet, and don’t know how nature accomplished it, perhaps we are forced to say that consciousness really is complex from the perspective of artificial synthesis. But if/when we have made an artificial mind — or settled upon a broad definition of consciousness that includes existing machines — then perhaps we’ll think of consciousness as easy! Maybe it’s everywhere already! Why pay for what’s free?

3. Degree of organization: Objects and processes that seem intricately structured are also seen as complex. This type of complexity differs strikingly from computational complexity. A string of random noise is extremely complex from an information-theoretic perspective, because it is virtually incompressible — it cannot be condensed into a simple algorithm. A book consisting of totally random characters contains more information, and is therefore more algorithmically complex, that a meaningful text of the same length. But strings of random characters are typically interpreted as totally lacking in structure, and are therefore in a sense very simple. Some measures that Lloyd associates with organizational complexity include: Fractal dimension, metric entropy, Stochastic Complexity and several more, most of which I confess I had never heard of until today. I suspect that characterizing organizational structure is an ongoing research endeavor. In a sense that’s what mathematics is — the study of abstract structure.

Consciousness seems pretty organized, especially if you’re having a good day! But it’s also the framework by which we come to know that organization exists in nature in the first place…so this gets a bit Ioopy . 🙂

Seth Lloyd ends his list with concepts that are related to complexity, but don’t necessarily have measures. These I think are particularly relevant to consciousness and, to the more prosaic world I work in: neural network modeling.

Self-organization

Complex adaptive system

Edge of chaos

Consciousness may or may not be self-organized, but it definitely adapts, and it’s occasionally chaotic.

To Lloyd’s very handy list led me also add self-organized criticality and emergence. Emergence is an interesting concept which has been falsely accused of being obscurantism. A property is emergent is if is seen in a system, but not in any constituent of the system. For instance, the thermodynamic gas laws emerge out of kinetic theory, but they make no reference to molecules. The laws governing gases show up when there is a large enough number of particles, and when these laws reveal themselves, microscopic details often become irrelevant. But gases are the least interesting substrates for emergence. Condensed matter physicists talk about phenomena like the emergence of quasiparticles, which are excitations in a solid that behave as if they are independent particles, but depend for this independence, paradoxically, on the physics of the whole object. (Emergence is a fascinating subject in its own right, regardless of its relevance to consciousness. Here’s a paper that proposes a neat formalism for talking about emergence: Emergence is coupled to scope, not level. PW Anderson’s classic paper “More is Different” also talks about a related issue: pdf )

Consciousness may well be an emergent process — we rarely say that a single neuron or a chunk of nervous tissue has a mind of its own. Consciousness is a word that is reserved for the whole organism, typically.

So is consciousness complex? Maybe…but not really in measurable ways. We can’t agree on how to describe it, we haven’t created it artificially yet, and we don’t know how it is organized, or how it emerged!

In my personal opinion many of the concepts people associate with consciousness are far outside of the scope of mainstream science. These include qualia, the feeling of what-it-is-like, and intentionality, the observation that mental “objects” always seems to be “about” something.

This doesn’t mean I think these aspects of consciousness are meaningless, only that they are scientifically intractable. Other aspects of consciousness, such as awareness, attention, and emotion might also be shrouded in mystery, but I think neuroscience has much to say about them — this is because they have some measurable aspects, and these aspects step out of the shadows during neurological disorders, chemical modulation, and other abnormal states of being.

However…

There are famous neuroscientists who might disagree. Giulio Tononi has come up with something called integrated information theory, which comes with a measure of consciousness he christened phi. Phi is supposed to capture the degree of “integratedness” of a network. I remain quite skeptical of this sort of thing — for now it seems to be a metaphor inspired by information theory, rather than a measurable quantity. I can’t imagine how we will be able to relate it to actual experimental data. Information, contrary to popular perception, is not something intrinsic to physical objects. The amount of information in a signal depends on the device receiving the signal. Right now we have no way of knowing how many “bits” are being transmitted between two neurons, let alone between entire regions of the brain. Information theory is best applied when we already know the nature of the message, the communication channel, and the encoding/decoding process. We have only partially characterized these aspects of neural dynamics. Our experimental data seem far too fuzzy for any precise formal approach. [Information may actually be a concept of very limited use in biology, outside of data fitting. See this excellent paper for more: A deflationary account of information in biology. This sums it up: “if information is in the concrete world, it is causality. If it is abstract, it is in the head.”]

But perhaps this paper will convince me otherwise: Practical Measures of Integrated Information for Time-Series Data. [I very much doubt it though.]

___

I thought I would write a short answer… but I ended up learning a lot as I added more info.