An answer I wrote to the Quora question Does the human brain work solely by pattern recognition?:

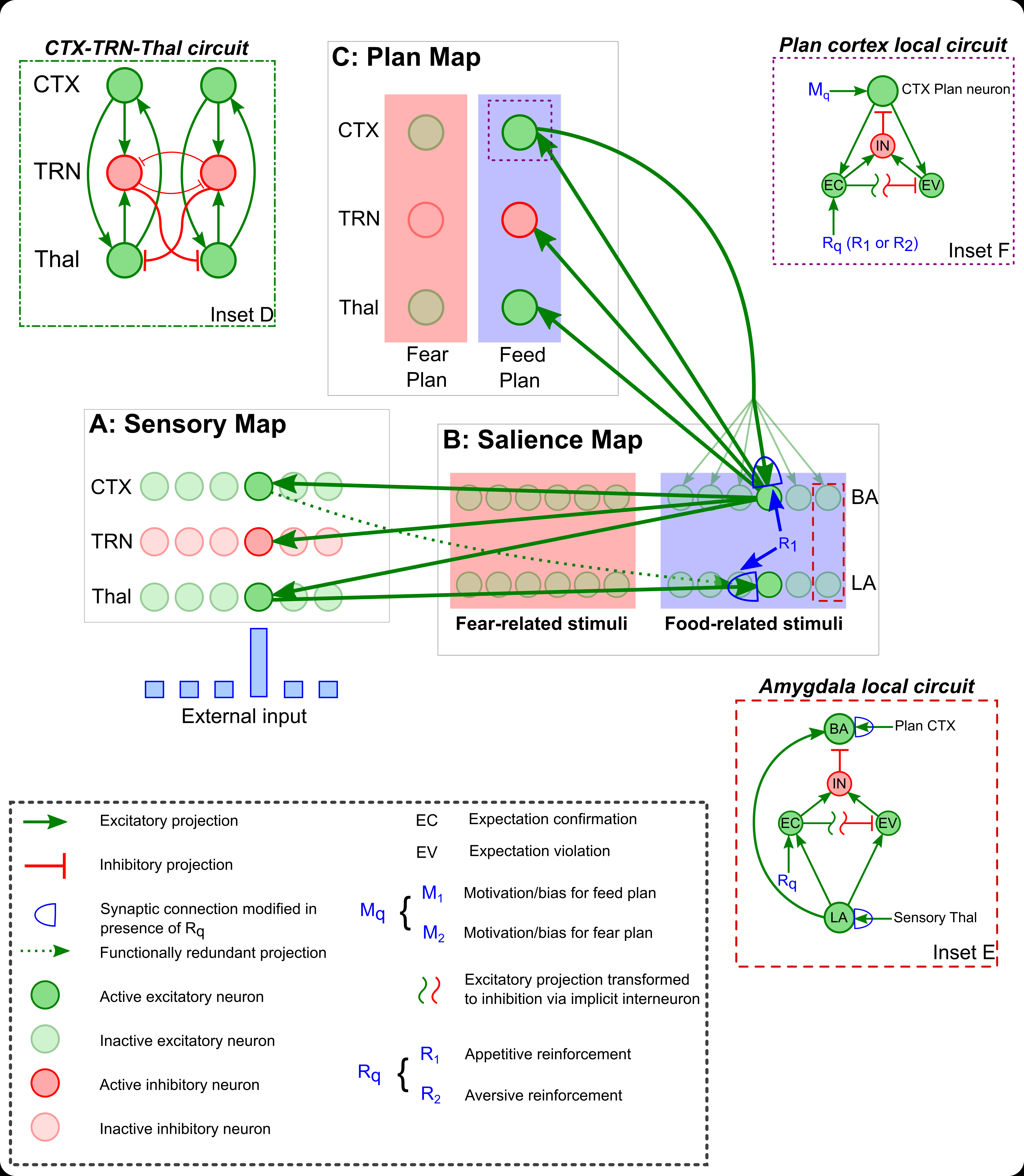

Great question! Broadly speaking, the brain does two things: it processes ‘inputs’ from the world and from the body, and generates ‘outputs’ to the muscles and internal organs.

Pattern recognition shows up most clearly during the processing of inputs. Recognition allows us to navigate the world, seeking beneficial/pleasurable experiences and avoiding harmful/negative experiences.* But recognizing a pattern does not by itself tell us what it means for us, so pattern recognition must be supplemented by associative learning: humans and animals must learn how patterns relate to each other, and to their positive and negative consequences.

And patterns must not simply be recognized: they must also be categorized. We are bombarded by patterns all the time. The only way to make sense of them is to categorize them into classes that can all be treated similarly. We have one big category for ‘snake’, even though the sensory patterns produced by specific snakes can be quite different. Pattern recognition and classification are closely intertwined, so in what follows I’m really talking about both.

Creativity does have a connection with pattern recognition. One of the most complex and fascinating manifestations of pattern recognition is the process of analogy and metaphor. People often draw analogies between seemingly disparate topics: this requires creative use of the faculty of pattern recognition. Flexible intelligence depends on the ability to recognize patterns of similarity between phenomena. This is a particularly useful skill for scientists, teachers, artists, writers, poets and public thinkers, but it shows up all over the place. Many internet memes, for example, involve drawing analogies: seeing the structural connections between unrelated things.

One of my favourites is a meme on Twitter called #sameguy. It started as a game of uploading pictures of two celebrities who resemble each other, followed by the hashtag #sameguy. But it evolved to include abstract ideas and phenomena that are the “same” in some respect. Making cultural metaphors like this requires creativity, as does understanding them. One has to free one’s mind of literal-mindedness in order to temporarily ignore the ever-present differences between things and focus on the similarities.

Here’s a blog that collects #sameguy submissions: Same Guy

On Twitter you sometimes come across more imaginative, analogical #sameguy posts: #sameguy – Twitter Search

The topic of metaphor and analogy is one of the most fascinating aspects of intelligence, in my opinion. I think it’s far more important than coming up with theories about ‘consciousness’. 🙂 Check out this answer:

Why are metaphors and allusions used while writing?

(This Quora answer is a cross-post of a blog post I wrote: Metaphor: the Alchemy of Thought)

In one sense metaphor and analogy are central to scientific research. I’ve written about this here:

What are some of the most important problems in computational neuroscience?

Science: the Quest for Symmetry

This essay is tangentially related to the topic of creativity and patterns:

From Cell Membranes to Computational Aesthetics: On the Importance of Boundaries in Life and Art

* The brain’s outputs (commands to muscles and glands) are closely linked with pattern recognition too. What you choose to do depends on what you can do given your intentions, circumstances, and bodily configuration. The state that you and the universe happen to be in constrains what you can do, and so it is useful for the brain to recognize and categorize the state in order to mediate decision-making, or even non-conscious behavior. When you’re walking on a busy street, you rapidly process the pathways that are available to you. Even if you stumble, you can quickly and unconsciously act to minimize damage to yourself and others. Abilities of this sort suggest that pattern recognition is not purely a way to create an ‘image’ of the world, but also a central part of our ability to navigate it.
